I want to be careful with this one from the beginning.

Caching GraphQL query results in a Graphene-Django API can be useful.

It can also be a smell.

If a basic query takes a quarter of a second before it has done anything interesting, caching may be the fastest way to protect the product. But it is not the same thing as fixing the underlying design. It is a workaround. Sometimes a very practical one, but still a workaround.

That distinction matters because performance work has a way of turning temporary patches into architecture.

In one Django API I worked on, the stack was fairly typical for a content-heavy platform:

- Django

- Graphene-Django

- Relay-style nodes and connections

- Redis through Django's cache framework

- a lot of read-heavy GraphQL queries for menus, pages, projects, filters, topics, and other mostly stable data

The product did not need every one of those resolvers to hit the database and pass through the full GraphQL execution path on every request. Some data changed rarely. Some queries were called often. Some responses were the same for many users.

That is the kind of environment where resolver-level caching starts to look tempting.

The question that pushed this direction

There is an old Graphene issue titled "CACHE queries (Django first) (Node.Field / Generate Json file?)" where someone asked whether filters or queries could be output into cache as JSON to improve client query performance.

The issue did not turn into a blessed Graphene feature. It was closed as wontfix, and the matching Graphene-Django issue was closed as a duplicate.

That outcome is useful in its own way.

It tells you something important: if you want this kind of caching, you are probably going to implement it at your application boundary. Graphene will execute the query you give it. Django will give you cache primitives. But deciding what is safe to cache, for whom, and for how long is your job.

That is as it should be.

GraphQL caching is not a library toggle. It is a correctness decision.

The actual workaround

The implementation I saw was intentionally small.

It used Django's cache backend, backed by Redis:

    CACHES = {
        "default": {
            "BACKEND": "django_redis.cache.RedisCache",
            "LOCATION": REDIS_URL,
            "OPTIONS": {"CLIENT_CLASS": "django_redis.client.DefaultClient"},
        }
    }

Then it introduced a decorator for selected resolvers:

    import functools

    from django.core.cache import cache

    _MISSING = object()  # sentinel so falsy values such as [] still count as hits


    def resolve_if_cached_query(ttl=60 * 15):
        """
        Return the cached result for this request body and resolver, if any.
        """

        def do_resolve_if_cached_query(func):
            @functools.wraps(func)
            def wrapper_func(*args, **kwargs):
                # args[1] is Graphene's `info`; its context is the Django request.
                cache_key = fetch_cache_key(
                    args[1].context.body.decode("utf-8"), func.__name__
                )
                cached_item = cache.get(cache_key, _MISSING)
                if cached_item is not _MISSING:
                    return cached_item
                record = func(*args, **kwargs)
                cache.set(cache_key, record, ttl)
                return record

            return wrapper_func

        return do_resolve_if_cached_query

The cache key was derived from the raw GraphQL request body and the resolver name:

    cache_key = fetch_cache_key(
        info.context.body.decode("utf-8"),
        func.__name__,
    )

The hash function made the request body safe to use as a cache key:

    def fetch_cache_key(content, prefix="cache"):
        content_hash = make_hash_sha256(content)
        return f"{prefix}__{content_hash}"
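The `make_hash_sha256` helper is not shown in the code above. A minimal sketch, assuming it does nothing more than hex-encode a SHA-256 digest of the UTF-8 text, could look like this. The sample request body is made up for illustration.

```python
import hashlib


def make_hash_sha256(content: str) -> str:
    """Assumed helper: hex-encoded SHA-256 digest of a text value."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def fetch_cache_key(content: str, prefix: str = "cache") -> str:
    # Hashing keeps the key short and free of whitespace, however long
    # or oddly formatted the request body is.
    return f"{prefix}__{make_hash_sha256(content)}"


# Hypothetical request body, used only to show the shape of the key.
key = fetch_cache_key('{"query": "{ menu { title } }"}', "resolve_menu")
print(key)
```

The resulting key is the resolver name, a double underscore, and 64 hexadecimal characters.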

Then resolvers opted in:

    class Query(graphene.ObjectType):
        menu = graphene.Field(MenuType)

        @staticmethod
        @resolve_if_cached_query()
        def resolve_menu(root, info, *args, **kwargs):
            return Menu.get_solo()

The same pattern can be used for read-heavy resolvers:

    @resolve_if_cached_query()
    def resolve_all_topics(root, info, *args, **kwargs):
        return Topic.objects.all()

The important phrase is "opted in."

This was not global GraphQL response caching. It was not middleware caching every request that happened to look similar. It was a selective escape hatch for resolvers where the team believed the data was stable enough and the cost was high enough.

That is the right level of caution.

Why this can help

Graphene-Django APIs can spend time in several places:

- database queries

- ORM object construction

- filtering and pagination

- permission checks

- resolver execution

- GraphQL parsing and validation

- serialization into the final response shape

The usual first move is to optimize database access. Use select_related, prefetch_related, pagination limits, and better querysets. That still matters.

But sometimes the database is not the whole story.

In GraphQL, even a query that returns familiar Django objects still has to go through the GraphQL execution machinery. For a content-heavy API with repeated anonymous or semi-public queries, doing that work over and over can be wasteful.

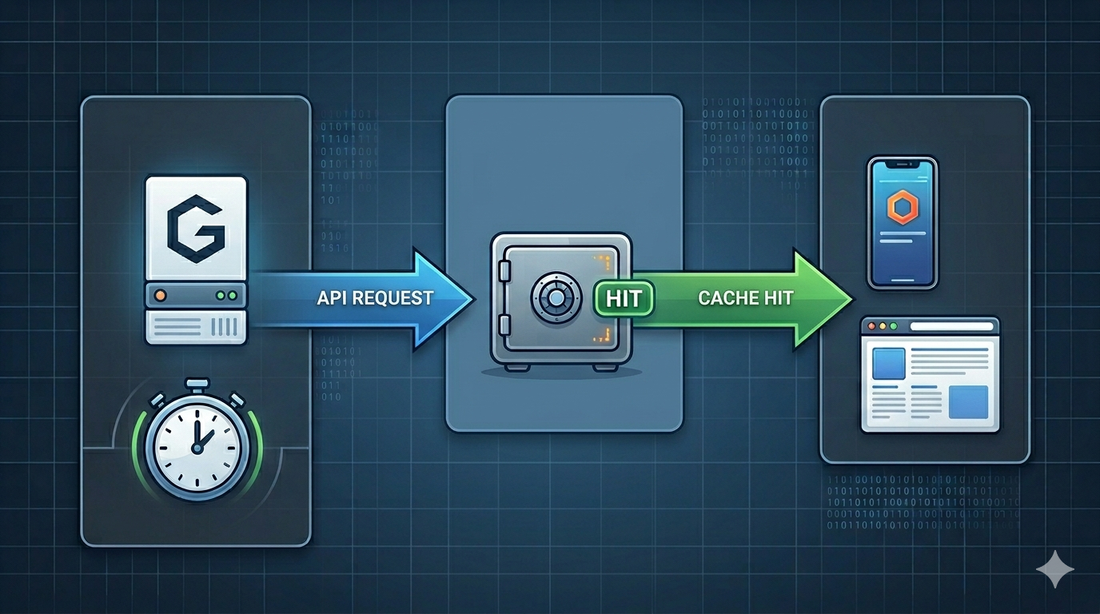

Caching at the resolver level gives you a crude but effective shortcut:

    same request body + same resolver
      -> same cache key
      -> return cached result

For stable data like menus, footer content, public taxonomy lists, page content, roles, or dashboard options, that may be enough to remove a lot of repeated work.

Again, this is not elegant.

But production systems do not always need elegance first. Sometimes they need breathing room.

The part that should make you nervous

Caching GraphQL results is easy to get wrong because GraphQL queries are not just URLs.

The same operation can be formatted differently. Variables can change the result. The authenticated user can change the result. Permissions can change the result. Locale, tenant, preview mode, feature flags, and request headers can change the result.

If the cache key only includes the raw request body, you need to be sure the response does not also depend on something outside that body.

This is where senior engineers need to slow down.

Before putting a resolver behind this decorator, ask:

- Is the response identical for every user?

- Does the resolver depend on info.context.user?

- Does the resolver depend on permissions, tenant, locale, or request headers?

- Can mutations change this data?

- Is a 15-minute stale response acceptable?

- Does the resolver return a queryset that Graphene will evaluate later?

- Are we caching per resolver, or accidentally caching a shape that belongs to the full operation?

Those questions matter more than the decorator.

The danger is not that the cache fails. The danger is that it works and quietly serves the wrong data.

Do not cache personalized data casually

In the codebase I looked at, some cached resolvers were clearly safe candidates: menu data, topics, activities, public page sections, and other stable content.

Those are the easy cases.

The harder cases are resolvers behind authentication or staff permissions. A resolver like allAdmins or searchUser may look cacheable because many staff users ask similar questions. But if the response depends on role, organization, permissions, or active filters, the cache key must include that context.

Otherwise one user's result can become another user's response.

That is not a performance bug.

That is a data leak.

For user-specific resolvers, I would either avoid this approach or include a deliberate identity and authorization component in the cache key:

    key_parts = {
        "body": request_body,
        "resolver": func.__name__,
        "user_id": info.context.user.id,
        "role": current_role,
    }

Even then, I would want tests around it.
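To make that concrete, here is a minimal sketch of turning those key parts into an actual cache key. The function name `scoped_cache_key`, the `role` argument, and the sample users are hypothetical. How a role is determined depends on the application.

```python
import hashlib
import json
from types import SimpleNamespace


def scoped_cache_key(request_body, resolver_name, user, role):
    """Key that changes whenever the body, resolver, user, or role changes."""
    key_parts = {
        "body": request_body,
        "resolver": resolver_name,
        # Users without an id (for example anonymous ones) share one bucket.
        "user_id": getattr(user, "id", None),
        "role": role,
    }
    # sort_keys gives a stable serialization, so equal parts hash equally.
    canonical = json.dumps(key_parts, sort_keys=True)
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return f"{resolver_name}__{digest}"


# Stand-in users; in Django these would come from info.context.user.
body = '{"query": "{ allAdmins { edges { node { id } } } }"}'
alice = SimpleNamespace(id=1)
bob = SimpleNamespace(id=2)

key_alice = scoped_cache_key(body, "resolve_all_admins", alice, "staff")
key_bob = scoped_cache_key(body, "resolve_all_admins", bob, "staff")
print(key_alice != key_bob)
```

Scoping by user gives up most of the hit rate, which is often a sign that the resolver was not a good caching candidate to begin with.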

Caching public content is one thing. Caching permissioned GraphQL results is another.

Invalidation is the real design problem

The sample decorator used a TTL:

    ttl=60 * 15

Fifteen minutes is a reasonable first compromise. It limits the blast radius of stale data without requiring a complex invalidation system.

But TTL-based caching is still a product decision.

If an admin updates the menu, is it acceptable for the old menu to remain visible for 15 minutes? If a project is unpublished, can it appear in search results until the cache expires? If a role changes, can permissions lag?

Sometimes the answer is yes.

Sometimes the answer is absolutely not.

When the answer is no, you need explicit invalidation. That might mean clearing keys on mutation, versioning cache keys by model update timestamps, or using narrower cache keys per object or per list.
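One of those options, versioned keys, can be sketched in a few lines. This is an illustration under assumptions, not the code from the project. `VersionedCache` is a hypothetical in-memory stand-in so the example runs on its own. In a real setup the version counter would live in the shared cache as well, and the invalidation call would sit in the mutation or in a model save hook.

```python
class VersionedCache:
    """Tiny in-memory stand-in for a cache with per-namespace versions."""

    def __init__(self):
        self._data = {}
        self._versions = {}

    def _key(self, namespace, key):
        version = self._versions.get(namespace, 1)
        return f"{namespace}:v{version}:{key}"

    def get(self, namespace, key, default=None):
        return self._data.get(self._key(namespace, key), default)

    def set(self, namespace, key, value):
        self._data[self._key(namespace, key)] = value

    def invalidate(self, namespace):
        # Bumping the version orphans every old key in the namespace.
        # With a real backend the orphans would age out through their TTL.
        self._versions[namespace] = self._versions.get(namespace, 1) + 1


cache = VersionedCache()
cache.set("menu", "main", {"items": ["Home", "Projects"]})
before = cache.get("menu", "main")

# A hypothetical updateMenu mutation would call this after saving.
cache.invalidate("menu")
after = cache.get("menu", "main")
print(before, after)
```

The appeal of this design is that invalidation never has to enumerate keys. The cost is that stale entries occupy memory until they expire.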

The hard part is not putting something in Redis.

The hard part is knowing when it must leave Redis.

What I would change in a second pass

If I were refining this pattern today, I would make the cache contract more explicit.

First, I would avoid hiding too much behind "cache the request body." I would make each cached resolver declare what kind of cache it is:

    @cache_public_query(ttl=900)
    def resolve_menu(root, info, *args, **kwargs):
        return Menu.get_solo()

The word public is doing useful work there. It tells reviewers that this resolver should not depend on identity.

Second, I would normalize the key inputs. Raw body hashing works, but it can miss semantically identical queries if formatting differs. A more robust design would include operation name, variables, resolver name, and any relevant context.
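A rough sketch of that normalization, assuming the request body is the usual JSON payload with `query`, `variables`, and `operationName` fields. The function name `normalized_cache_key` and the `context` argument are hypothetical. Collapsing whitespace is a crude stand-in for real query normalization. Parsing the query and re-printing it with a GraphQL library would be more robust.

```python
import hashlib
import json
from typing import Optional


def normalized_cache_key(
    body: str, resolver_name: str, context: Optional[dict] = None
) -> str:
    """Build a key from the parsed request instead of the raw bytes."""
    payload = json.loads(body)
    parts = {
        "resolver": resolver_name,
        "operation": payload.get("operationName"),
        # Caveat: this also collapses whitespace inside string literals,
        # so it only suits queries that pass their values as variables.
        "query": " ".join(payload.get("query", "").split()),
        "variables": payload.get("variables") or {},
        # Anything else that affects the result: locale, tenant, and so on.
        "context": context or {},
    }
    canonical = json.dumps(parts, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return f"{resolver_name}__{digest}"


# Same operation, different formatting and field order in the JSON body.
compact = '{"query": "{ menu { title } }", "variables": {}}'
pretty = '{"variables": null, "query": "{\\n  menu {\\n    title\\n  }\\n}"}'

key_compact = normalized_cache_key(compact, "resolve_menu")
key_pretty = normalized_cache_key(pretty, "resolve_menu")
print(key_compact == key_pretty)
```

With raw body hashing, these two requests would occupy two separate cache entries even though they ask for the same thing.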

Third, I would add logging.

At minimum:

- cache hit

- cache miss

- resolver name

- TTL

- key prefix

Without observability, caching becomes superstition. You need to know whether it is helping.
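A minimal sketch of what that logging could look like, using only the standard library. The names `logged_cache`, `DictCache`, and the `graphql.cache` logger are assumptions for illustration. `DictCache` only mimics the `get`/`set` shape of Django's cache so the example runs without a Redis server.

```python
import functools
import logging

logger = logging.getLogger("graphql.cache")
_MISSING = object()


class DictCache:
    """In-memory stand-in exposing the get/set shape of Django's cache."""

    def __init__(self):
        self._data = {}

    def get(self, key, default=None):
        return self._data.get(key, default)

    def set(self, key, value, timeout=None):
        self._data[key] = value  # the timeout is ignored in this sketch


def logged_cache(cache, key_func, ttl=900):
    def decorator(func):
        @functools.wraps(func)
        def wrapper(root, info, *args, **kwargs):
            key = key_func(info, func.__name__)
            value = cache.get(key, _MISSING)
            hit = value is not _MISSING
            if not hit:
                value = func(root, info, *args, **kwargs)
                cache.set(key, value, ttl)
            # Log only the key prefix; the hash itself adds noise.
            logger.info(
                "graphql_cache result=%s resolver=%s ttl=%s key_prefix=%s",
                "hit" if hit else "miss", func.__name__, ttl,
                key.split("__", 1)[0],
            )
            return value

        return wrapper

    return decorator


logging.basicConfig(level=logging.INFO)
calls = []


@logged_cache(DictCache(), key_func=lambda info, name: f"{name}__demo")
def resolve_menu(root, info):
    calls.append(1)  # counts how often the real resolver runs
    return {"title": "Main menu"}


first = resolve_menu(None, None)   # logs a miss
second = resolve_menu(None, None)  # logs a hit
```

Counting hits and misses per resolver over a day is usually enough to tell whether a given cache entry is earning its place.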

Fourth, I would add tests for unsafe caching boundaries. The test I want is not only "same query returns cached data." I also want "two users with different permissions do not share a cached response."

Fifth, I would keep looking for the deeper fix.

Maybe the query needs better ORM optimization. Maybe the GraphQL schema is too broad. Maybe the frontend is asking for too much. Maybe the endpoint should be REST for that particular read path. Maybe the API should precompute a JSON document for public content.

Caching should buy time to think, not replace thinking.

About the parser question

There is a separate frustration here that is worth naming.

If the Python GraphQL execution path is a meaningful part of response time, it is fair to wonder why more of that work is not delegated to something faster, maybe a native extension around a parser written in Rust or another systems language.

Extensions are a hassle. Packaging is harder. Cross-platform support is harder. Debugging is harder.

But a quarter-second baseline cost on simple queries is also a hassle. It is just moved onto every request instead of every build.

I do not want to overstate that point. Most application teams should not start by rewriting GraphQL internals or blaming the parser. Measure first. Profile first. Fix the obvious ORM mistakes first.

But after you have done that, it is reasonable to admit that runtime choices have consequences. A pure Python stack gives you approachability and ecosystem fit. It may also give you overhead that you eventually have to work around.

That is the trade.

The mental model I use now

I would not describe resolver-level caching as a good GraphQL design.

I would describe it as a tactical pressure valve.

Use it when:

- the resolver is read-heavy

- the response is stable enough

- the data is public or safely scoped

- the stale window is acceptable

- the cache key includes every input that can affect the result

- there is a plan for invalidation or expiry

- the team has measured that caching actually helps

Avoid it when:

- the response depends on subtle permissions

- mutations need immediate consistency

- the query shape changes often

- stale data would confuse users or break workflows

- caching hides a database or schema design problem that should be fixed

The mature version of this pattern is not "cache GraphQL."

The mature version is:

Cache specific read paths with explicit correctness boundaries.

That sentence is less exciting, but much safer.

Closing thought

Graphene-Django query caching can be useful.

It can also make a system harder to reason about if it is treated as a general performance feature.

The workaround I described here is small, practical, and honest about what it is doing. It hashes the request body, uses Django's cache backend, and lets selected resolvers opt in. For stable public data, that can be enough to reduce repeated work and protect response times.

But I would not call it a clean design.

It is a tool for when the product needs relief before the architecture has been fully rethought.

And that is fine, as long as everyone remembers the difference.
